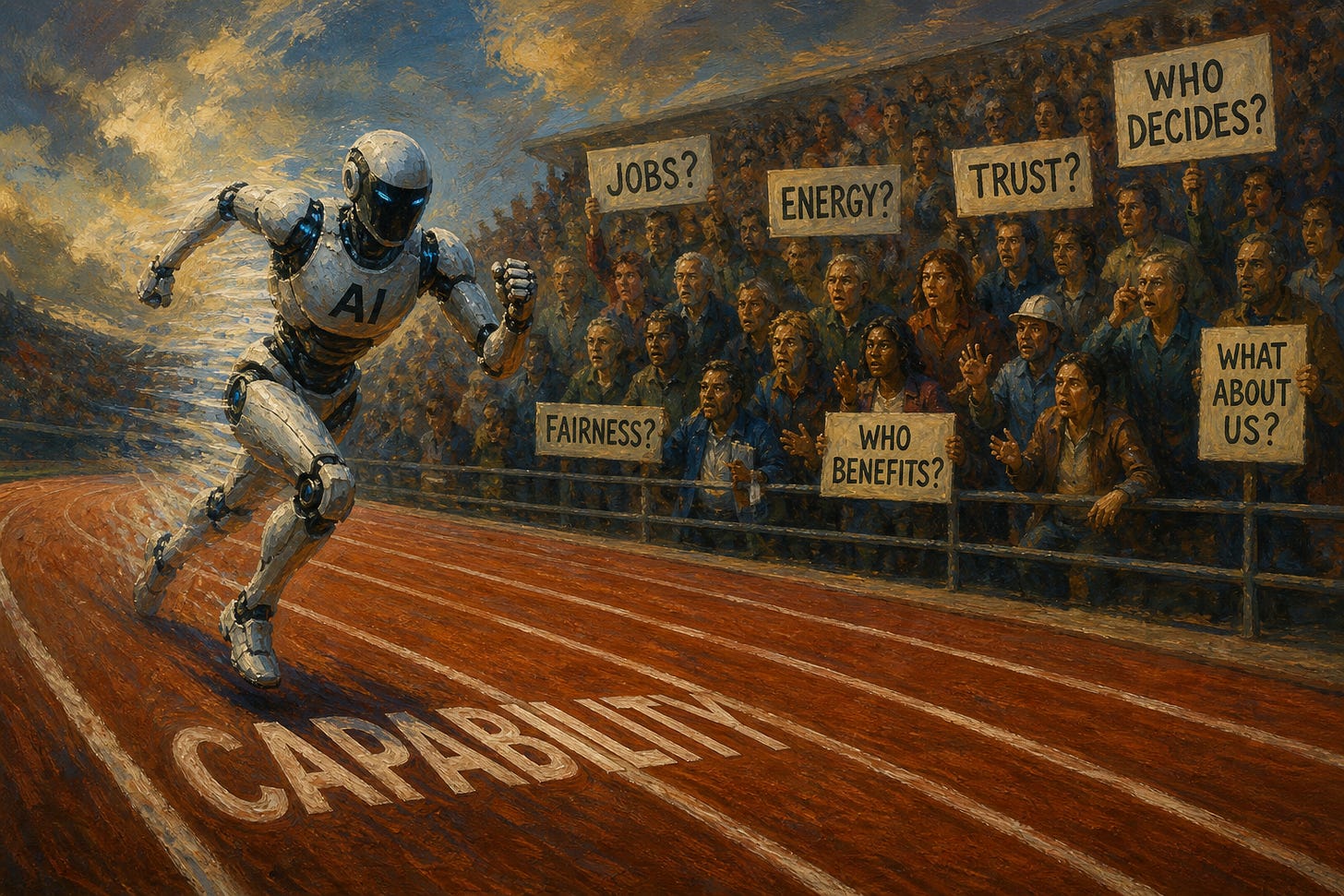

AI has a PR problem

The industry is winning the model race and losing the trust race

I am an AI optimist. I think it will be one of the defining technologies of our lifetime. I also believe the industry is badly misreading the room.

Inside the Valley, the conversation still sounds like 2023: more powerful models, better benchmarks, faster agents, cheaper inference, longer context windows. But outside the Valley, the story has moved on. The public is not asking whether AI can write code, pass exams, generate videos, or answer emails. Increasingly, it is asking a more basic question: Who asked for this?

There is a growing sense that AI is being imposed on the public by a small group of companies, backed by enormous capital, consuming enormous energy, trained on other people’s work, threatening other people’s jobs, and sold back to society as inevitable. This is a bad way to introduce a general-purpose technology to a democracy.

Evan Spiegel, hardly an anti-tech populist, recently warned that technology leaders are underestimating the coming societal backlash against AI. Even Sam Altman acknowledged at BlackRock’s U.S. Infrastructure Summit in Washington, D.C., that “AI is not very popular in the U.S. right now.”

The polling is worse than the vibes. In a March 2026 NBC News poll of 1,000 registered voters, only 26% had a positive view of AI, while 46% had a negative view. AI’s net image was -20, slightly worse than ICE, which registered 38% positive / 56% negative, or -18. The only items in the poll with worse net images were the Democratic Party and Iran.

For context, this means the industry has taken a technology that can help cure disease, tutor children, defend networks, accelerate science, and make millions of people more productive, and somehow branded it in the neighborhood of deportation raids and geopolitical enemies. That takes work.

The industry’s mistake has been to assume utility equals legitimacy. It does not. A technology can be useful and resented. It can be adopted and distrusted. It can be everywhere and still feel like an intrusion.

That is exactly where AI appears to be heading. The same NBC poll found that 56% of voters had used an AI platform such as ChatGPT, Microsoft Copilot, or Google Gemini in the prior 2-3 months. But 57% said the risks of AI outweigh the benefits, versus only 34% who said the benefits outweigh the risks. That is the paradox: people are using AI, but they do not feel good about it.

Some of it is economic. AI entered the workplace wrapped in a threat: use this, or be replaced by someone who does. For two years, the industry’s loudest voices made the same argument in different forms: entire categories of work will disappear, white-collar labor is next, agents will do what junior employees do, one-person companies will replace teams, everyone needs to adapt. Even when framed as optimism, the message landed as menace.

Then, when people reacted badly to being told they were economically disposable, the language softened. We love our users. AI will augment people. The future will be abundant. Workers will be more creative. Everyone will have a superpower. But people had already heard the first part.

You cannot tell people they are economically obsolete on Monday and expect them to applaud your product launch on Tuesday.

The backlash is also physical. Maine’s Legislature recently passed what Maine Public described as a first-in-the-nation temporary ban on large data centers, aimed at projects using more than 20 megawatts of power. The debate centered on electricity, water, environmental impact, local control, and whether communities were prepared for AI-scale infrastructure.

AI has gone from a software story to a land-use story. And land-use politics are much harder. People may tolerate an app update they dislike. They are much less tolerant of a 20-megawatt facility down the road that they believe could raise power prices, strain water resources, or alter the character of their town.

The backlash is also personal. In April 2026, WIRED reported that a suspect allegedly threw an incendiary device toward Sam Altman’s residence and then made threats outside OpenAI’s headquarters. No one was injured, and the facts should be treated carefully; political violence is indefensible, and an individual attack should not be conflated with normal public criticism. But symbolically, the incident shows how intensely AI leaders are being personalized as avatars of a future many people feel powerless to shape.

That personalization is dangerous for everyone. It is dangerous for executives and employees. It is dangerous for democratic debate. It is dangerous for the public, because rage at individuals can substitute for serious governance of systems.

The core of AI’s image problem is that the industry has accumulated institutional power without building institutional trust.

The backlash feels strange to technologists because from the inside, AI looks like abundance: more intelligence, more creativity, more productivity, more speed. From the outside, it can look like extraction: my work was scraped, my job is at risk, my power grid is strained, my kid is addicted, my feed is polluted, my trust is gone, and the people doing it are becoming billionaires.

That does not mean we should stop building. The United States cannot afford to stand still while other countries are investing aggressively. AI leadership will matter for national security, economic competitiveness, cyber defense, scientific capability, manufacturing, energy, and the next generation of software and hardware companies. Retreating from AI development would cede the frontier to others with different values, different governance models, and different commitments to openness.

A democratic society should want the world’s most important AI systems to be built in democratic countries. But that argument only works if democratic countries act democratically. That means taking people with us.

The AI industry’s challenge is not merely to build better models. It is to build a better social contract around those models. If the industry wants legitimacy, it has to change the conversation.

First, stop selling AI as inevitability. The word “inevitable” is politically combustible. It tells people their consent is irrelevant and makes democratic governance sound like a speed bump, inviting exactly the kind of backlash the industry now fears.

Second, be honest about tradeoffs. AI will create jobs and eliminate jobs. It will empower workers and displace some workers. The public does not need a fairy tale. It needs candor.

Third, make the benefits visible and local. Abstract promises about GDP growth will not persuade a nurse, teacher, paralegal, call center worker, graphic designer, or small business owner who feels exposed. Show how AI helps people do their jobs better, not just how it helps companies do more with fewer people. Show how communities benefit from infrastructure, not just how hyperscalers secure power. Show how students, patients, workers, and families gain agency.

Fourth, treat labor transition as a core part of deployment, not a philanthropic afterthought. If AI is going to reshape work, the industry needs to invest in training, mobility, and new career pathways. Not vague “reskilling” slogans. Real bridges from the jobs AI changes to the jobs AI creates.

Fifth, take infrastructure politics seriously. AI is not just software anymore. It is data centers, transmission lines, energy contracts, water usage, chips, cooling systems, and local permitting. Communities are not wrong to ask what they are getting in return.

Sixth, build trust into the product. Users need to know when AI is being used, what data is involved, what the system can and cannot do, and who is accountable when it fails. Disclosure, provenance, privacy controls, auditability, and safety are adoption accelerants.

Finally, the industry needs a more human vocabulary. The public does not want to be “disrupted.” People do not want to be “abstracted away.” Their jobs are not merely “tasks.” Their creative work is not merely “data.” Their communities are not merely “sites.” Their children are not merely “users.” Their concerns are not merely “sentiment.”

Language matters because it reveals the mental model. And too often, AI’s mental model of society sounds like an optimization problem.

AI companies are building technology that could genuinely improve human life. But they are often communicating in ways that make people feel minimized by it. The path forward is not acceleration at all costs or precaution at all costs. It is democratic acceleration.

Move fast, but explain where we are going. Build boldly, but share the upside. Compete globally, but listen locally. Automate tasks, but invest in people. Scale infrastructure, but earn community trust.

That is the burden of building a general-purpose technology in a democracy. You do not just need technical permission from the laws of physics and capital markets. You need social permission from the people whose lives will change.

There is a much more fundamental question, and it would be great for the titans of the AI industry to address this question honestly. It's this: What are the deep costs to human life of adopting this technology? I think this is ultimately what worries people the most. It's certainly what worries me the most.

I'm literally automating a workflow right now with Claude Code, and reading this while Claude works. When I'm done here I'll have reduced a 2-hour weekly slog to a few minutes. And the actual process of working with Claude to build this is so fun – it feels like magic!

So I love AI, and I'm also terrified of it, because it's really addictive.

When I see people I've known for years doing things they would never have done before, because now it's easy for them to do with the help of AI – I first think, great! Then a moment later I wonder what opportunities for personal growth they've now permanently discarded in favor of easy results. (And if I'm honest, the results often aren't that great...)

Saanya, this was brilliant. You articulated everything I've been seeing while flying between SF and middle America to work with Oil and Gas to reduce emissions (an oil-and-water convo right out of the gate). Silicon Valley is such a bubble. We reinforce our own biases and skip testing them with the masses because it feels inconvenient or hard or wrong because it's not our bubble speak.

We can't disregard the impact this has on individuals. The frontier labs need smarter ways to show people that AI is their personal win, not their risk. That's demand creation, a skill we leveraged a ton in cleantech and one people often don't realize they need. I don't see the industry hiring for this very much. They haven't felt the need to because of the fast-paced adoption they experienced, but they're coming up on that wall.

Imagine if the whole world was actually pro-AI. Data centers get built faster and with cleaner energy because communities want them. The user base grows faster. Corporate adoption accelerates. All of that is on the table if they stop forcing it and start earning it. It may feel like it takes longer, but it's often a much faster path than the alternative.

I hope they see there's real power in influencing a democracy rather than bulldozing one and real opportunity in adapting their strategy.