Anthropic’s Compute Speedrun

Anthropic is turning its biggest weakness into a procurement roadmap, stitching together hyperscalers, chipmakers, and even SpaceX to solve compute scarcity

OpenAI spent weeks needling Anthropic on the exact vulnerability users could feel: rate limits, degraded availability, compute constraints, the sense that Claude was powerful but rationed. That was a good competitive vector because it was tangible. Users did not need a benchmark chart; they could feel the wall.

For a while, this was one of the cleanest knocks against Anthropic. OpenAI could position itself as the lab with scale, availability, and user love, while Anthropic looked like the careful safety shop that had built a great model and then parked it behind a velvet rope.

Then Anthropic went shopping. In a matter of weeks, it has lined up Google, Amazon, and now SpaceX. The lab that was supposedly too cautious on compute is now assembling one of the most aggressive infrastructure portfolios in AI.

The irony is that Anthropic may not have been timid so much as ambushed by its own success. Dario Amodei has talked about pushing for 20x or 30x annual growth instead of 10x, but Anthropic reportedly saw Q1 revenue and usage grow 80x year-over-year. At that speed, capacity planning becomes disaster response.

You can be prudent at 10x growth. At 80x, you start calling everyone with a data center, including Elon Musk.
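To put a number on that gap, here is a back-of-the-envelope sketch. It assumes, purely for illustration, that required capacity scales linearly with usage, which the reporting does not claim; `capacity_shortfall` is a hypothetical helper, and the only real inputs are the growth multiples quoted above.

```python
def capacity_shortfall(planned_growth: float, actual_growth: float) -> float:
    """How many times larger demand is than the capacity provisioned for the plan.

    Assumption (not from the source): capacity needs scale linearly with usage.
    """
    if planned_growth <= 0:
        raise ValueError("planned_growth must be positive")
    return actual_growth / planned_growth


if __name__ == "__main__":
    actual = 80  # reported Q1 year-over-year growth multiple
    for planned in (10, 20, 30):  # planning multiples mentioned in the text
        ratio = capacity_shortfall(planned, actual)
        print(f"planned {planned}x, actual {actual}x: demand is {ratio:.1f}x provisioned capacity")
```

Even under the most aggressive 30x plan, demand comes out at more than two and a half times what was provisioned. Under a 10x plan it is eight times.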

The Great Hyperscaler Entanglement

Amazon x Anthropic. Anthropic committed up to $100 billion over the next 10 years to AWS, securing up to 5GW of new capacity to train and run Claude. The deal includes Amazon’s custom silicon as well as good old-fashioned Nvidia GPUs.

At the same time, Amazon has invested $5 billion in Anthropic, with up to another $20 billion potentially coming later. That builds on the $8 billion Amazon had already invested.

Google x Anthropic. The Google deal is even more fascinating because Google is both Anthropic’s infrastructure provider and one of its most direct model competitors.

Anthropic has committed to spend $200 billion with Google Cloud over five years for multiple gigawatts of next-generation TPU capacity starting in 2027. That deal alone reportedly represents more than 40% of Google Cloud’s disclosed revenue backlog and helped push Alphabet closer to Nvidia’s market-cap throne. Alphabet is also investing up to $40 billion in Anthropic, deepening their partnership.
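For a sense of scale, the headline numbers can be annualized. The sketch below assumes spend is spread evenly across each term, which is almost certainly not how the contracts are structured, and treats the "up to" AWS figure as if it were fully used. The `Commitment` class and `implied_backlog_ceiling` helper are hypothetical names for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Commitment:
    provider: str
    total_usd_bn: float  # headline commitment, in billions of dollars
    years: int           # stated term of the deal

    def per_year_usd_bn(self) -> float:
        # Assumption: even spend over the term (real ramps are likely back-loaded).
        return self.total_usd_bn / self.years


def implied_backlog_ceiling(deal_usd_bn: float, min_share: float) -> float:
    """If a deal is MORE than `min_share` of a backlog, the backlog is below this value."""
    return deal_usd_bn / min_share


commitments = [
    Commitment("AWS", 100.0, 10),          # "up to $100 billion over the next 10 years"
    Commitment("Google Cloud", 200.0, 5),  # "$200 billion ... over five years"
]

if __name__ == "__main__":
    for c in commitments:
        print(f"{c.provider}: about ${c.per_year_usd_bn():.0f}B per year")
    ceiling = implied_backlog_ceiling(200.0, 0.40)
    print(f"Implied Google Cloud backlog: under ${ceiling:.0f}B")
```

On those assumptions, the AWS deal works out to roughly $10 billion a year and the Google deal to roughly $40 billion a year, and a deal that is more than 40% of the backlog implies a disclosed backlog below about $500 billion.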

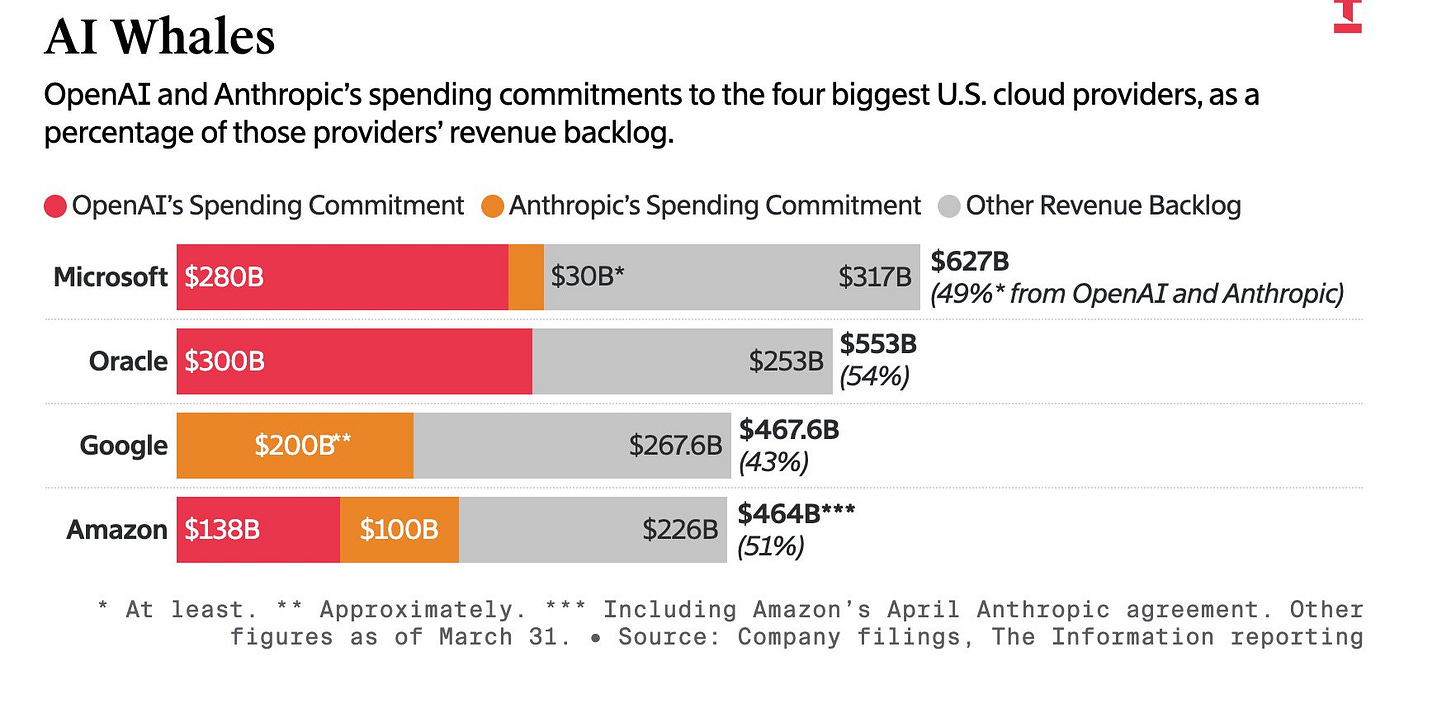

A chart from The Information captures the scale of this entanglement. OpenAI and Anthropic are becoming structural pillars of hyperscaler backlog. The biggest cloud companies in the world are increasingly underwriting the frontier labs, while the frontier labs are increasingly underwriting the cloud companies’ future revenue.

That is staggering, but it should not be misread as purely circular AI accounting.

I would worry about this setup much more if the customer demand at the other end were not so obvious. Anthropic reportedly grew usage 80x YoY in Q1 2026. That is why it needs compute. Claude Code is not being rate-limited because Google, Amazon, and Microsoft are trying to manufacture demand in a spreadsheet. It is being rate-limited because customers are using the product faster than Anthropic can provision capacity.

The hyperscaler financing is better understood as opportunistic participation in upside they can already see. If you are Google, Amazon, or Microsoft, you do not want to watch a frontier model company turn into one of the fastest-growing enterprise software businesses in history and merely send it invoices. You want the equity, the cloud spend, the infrastructure influence, and the strategic dependency.

So yes, the entanglement matters. The suppliers are investors. The competitors are landlords. The whole thing has the clean lines of a bowl of spaghetti. But the center of gravity is still real demand. The backlog is not just a financial artifact, but the physical shadow cast by demand for intelligence.

The Colossal Surprise

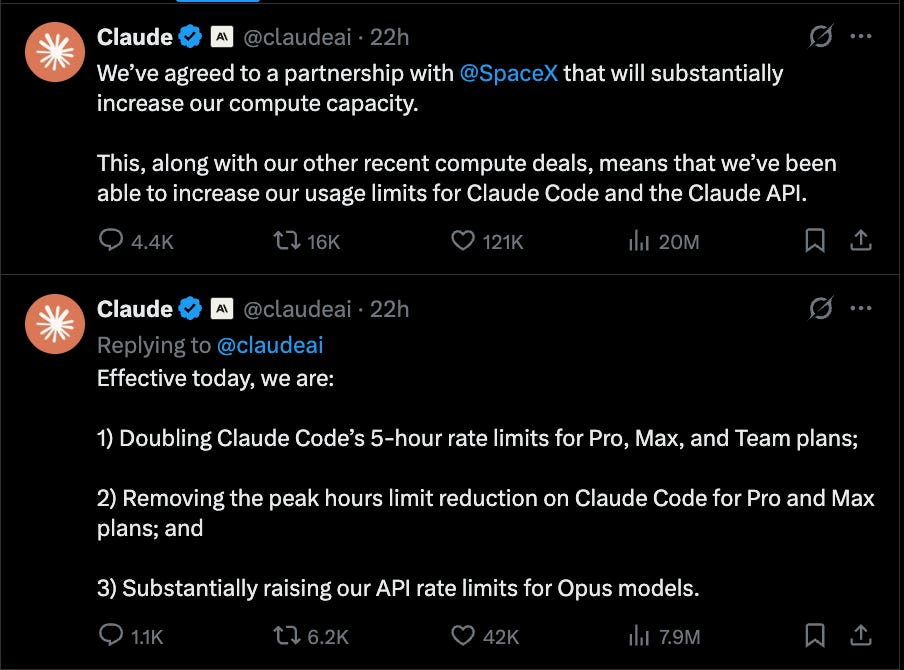

SpaceX x Anthropic. Anthropic will use all of the compute capacity at Colossus 1, giving it access to more than 300 MW of new capacity and over 220,000 Nvidia GPUs within the month.
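Those two figures imply a rough power budget per GPU. This is a sketch only: both inputs are lower bounds ("more than" and "over"), and the result is facility power per GPU, which also covers cooling, networking, and host servers rather than chip draw alone. `watts_per_gpu` is a hypothetical helper.

```python
def watts_per_gpu(site_megawatts: float, gpu_count: int) -> float:
    """Facility power divided by GPU count, in watts.

    Includes everything the site powers (cooling, networking, CPUs),
    so it overstates what each GPU draws on its own.
    """
    if gpu_count <= 0:
        raise ValueError("gpu_count must be positive")
    return site_megawatts * 1_000_000 / gpu_count


if __name__ == "__main__":
    # Figures quoted in the text, both stated as lower bounds.
    print(f"{watts_per_gpu(300, 220_000):,.0f} W of site power per GPU")
```

That comes to roughly 1.4 kW of site capacity per GPU.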

Anthropic says this is already allowing it to double Claude Code limits, remove peak-hour reductions for Pro and Max users, and raise Opus API limits.

The SpaceX deal is the funniest and most revealing one.

Elon Musk, who was recently dunking on Anthropic, is now renting it compute. SpaceX gets a marquee AI customer ahead of a potential IPO. Anthropic gets badly needed capacity. Nvidia gets another proof point that demand for GPUs remains feral. Everyone gets to pretend this was always the plan.

The clean “Anthropic = AWS” mental model is now dead.

Anthropic is buying every credible form of compute it can get: AWS Trainium, Google TPUs, Nvidia GPUs, Azure capacity, custom Fluidstack data centers, and now SpaceX’s Colossus 1. That tells us a few things.

First, compute scarcity is still real. If demand were easy to serve, Anthropic would not be stitching together capacity from half the industrial economy.

Second, Anthropic is deliberately reducing dependency risk. At frontier scale, relying too heavily on one cloud, one chip architecture, or one partner is a strategic vulnerability. Redundancy is survival.

Third, the hyperscalers are no longer just cloud vendors. They are becoming the kingmakers, financiers, landlords, and toll collectors of the AI economy. The model labs may get the product headlines, but the cloud providers are turning those headlines into backlog.

And fourth, OpenAI’s critique was not wrong. Anthropic did need more compute. The mistake was assuming that compute scarcity would remain a durable point of differentiation.

Anthropic appears to have taken the criticism as product feedback and responded with the most absurd compute procurement speedrun imaginable.

The AI race is still about model quality. But increasingly, model quality only matters if you can serve it, scale it, and keep it available when customers actually need to work.
