Google Keeps Going

Three launches this week show how Google’s ecosystem strategy is compounding

As the world debates ChatGPT vs Claude, the vertically-integrated cash-printing AI behemoth that is Google keeps embedding AI deeper into its ecosystem, pushing the frontier of research, and steadily taking market share.

Fresh Similarweb data today captured the slow creep. Over the last two months, Gemini’s share of AI traffic has risen from 20.7% to 24.4%, while OpenAI’s dominance has compressed from 65.8% to 61.7%. A reminder that patience and distribution invariably compound.

Let’s take a look at 3 key announcements coming out of Camp Google this week:

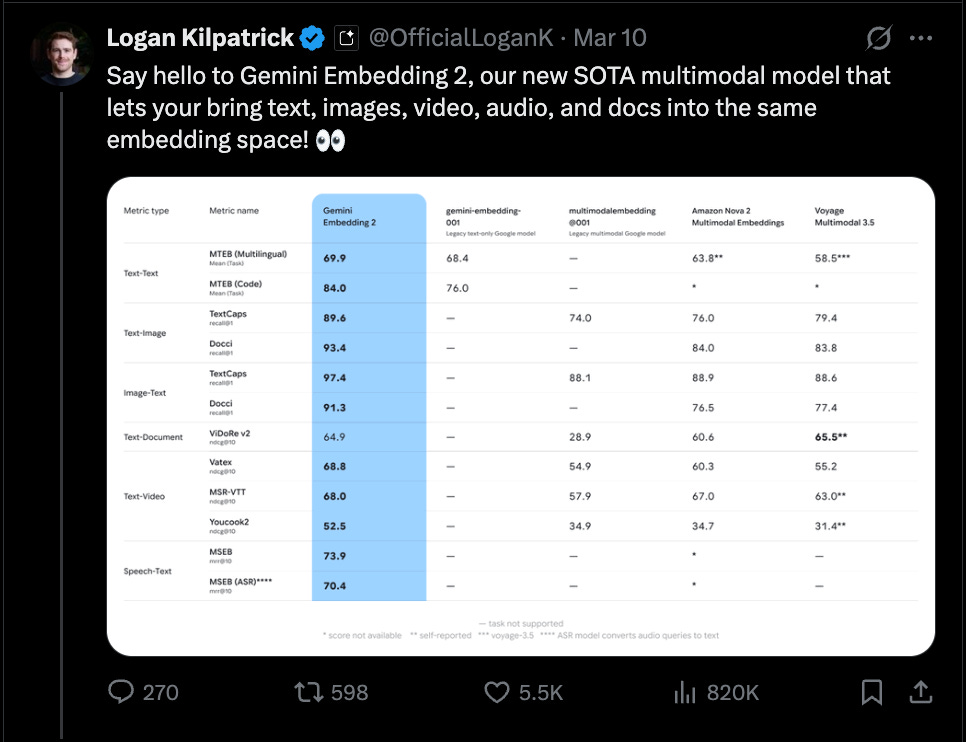

Gemini Embedding 2

Most of the attention in AI goes to generative models, but one of the most important layers in the stack is occupied by embedding models. An embedding model converts data (text, images, audio, video) into numerical vectors that capture semantic meaning. In practice, this is what powers:

semantic search

recommendation engines

clustering/classification

retrieval systems for LLMs (RAG)

In other words: embeddings are how AI systems understand large datasets.

Google’s new Gemini Embedding 2 model is notable because it creates a unified embedding space across modalities. Text, images, video, audio, PDFs - all mapped into the same vector representation.

That unlocks things like searching a video library using a text query, retrieving documents based on an image, and clustering multimodal datasets in a single index. It’s infrastructure for multimodal knowledge systems. Not flashy, but incredibly foundational.
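To make the idea concrete, here is a minimal sketch of cross-modal retrieval over a shared vector space. The vectors are hand-written toy values, not output from Gemini Embedding 2, and the file names and the `search` helper are hypothetical. In a real system each vector would come from an embedding model; the point is only that once everything lives in one space, a text query can be ranked against videos, images, and PDFs with the same similarity function.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; closer to 1.0 means more similar."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical index: items of different modalities in ONE vector space.
# These 3-dimensional vectors are made up for illustration; real
# embeddings have hundreds or thousands of dimensions.
index = {
    ("video", "surfing_clip.mp4"): [0.9, 0.1, 0.0],
    ("image", "beach_photo.png"): [0.6, 0.4, 0.2],
    ("pdf", "tax_form.pdf"): [0.0, 0.1, 0.9],
}

def search(query_vector, index, top_k=2):
    """Rank every item, regardless of modality, by similarity to the query."""
    scored = [
        (cosine_similarity(query_vector, vector), item)
        for item, vector in index.items()
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [item for _, item in scored[:top_k]]

# Pretend this is the embedding of the text query "ocean waves".
query = [0.85, 0.15, 0.05]
results = search(query, index)
print(results)
```

With these toy values the surfing video and the beach photo rank above the tax form, even though the query was text and none of the top results are.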

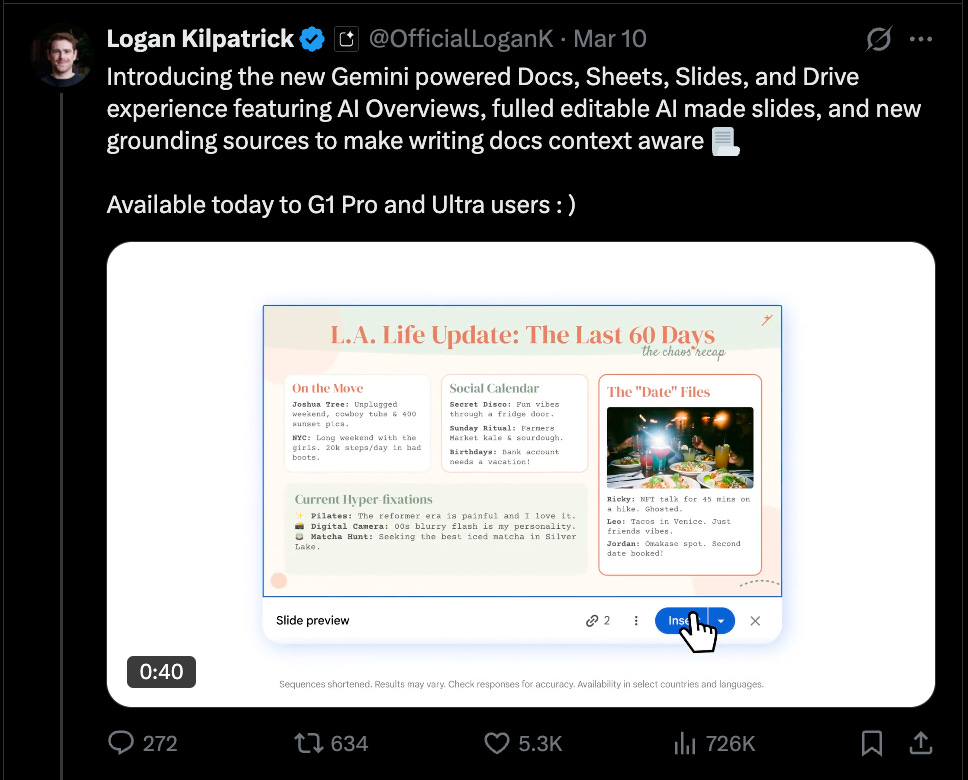

Gemini in the Productivity Stack

Google also announced deeper Gemini integration across Workspace. Gemini can now pull context across Docs, Sheets, Slides, Gmail, and Drive to generate outputs.

For example, say you ask Gemini to “create a Q3 strategy doc using the marketing plan in Drive and the sales numbers from Sheets”. It can now pull the files, extract relevant data, and draft a formatted document. Talk about AI-native document workflows.

Competitors can build similar capabilities, but Google’s advantage here is practical, not technical. If you use Workspace, all your data already lives there. No connectors, no uploads, no brittle integrations needed. Taking intelligence to your data is a much better, lower-friction product experience than piping data into the intelligence.

Other interesting upgrades include AI-native search across Drive, natural-language spreadsheet creation in Sheets, and automated slide creation in Slides. The interface increasingly becomes: “Describe what you want to produce.”

Gemini in Google Maps

Today, Google launched “Ask Maps.” Instead of typing queries like a search engine, you can now ask conversational questions like:

“My phone is dying — where can I charge it without having to wait in a long line for coffee?”

“Is there a public tennis court with lights on that I can play at tonight?”

“I’m headed to the Grand Canyon, Horseshoe Bend and Coral Dunes — any recommended stops along the way?”

Gemini interprets intent and generates recommendations using Maps’ location data plus your preferences and behavior.

Alongside it, Google introduced Immersive Navigation - a major visual overhaul with 3D buildings, lane markers, terrain rendering, and contextual traffic information.

Google Maps has 2B+ users globally. Embedding AI directly into that product turns Maps into something closer to a real-world AI interface.

This is impressive shipping velocity for a $3.7T company.

Jensen Huang recently described AI as a five-layer cake:

Energy → chips → infrastructure → models → applications.

Most companies pick one or two layers to compete in. Nvidia sells chips. AWS builds infrastructure. OpenAI builds models. Startups try to build applications. Google is present in all five.

The AI race might look like model vs model today, but increasingly it’s becoming ecosystem vs ecosystem. And Google has been building its ecosystem for twenty-five years.