🧾 Weekly Wrap Sheet (05/08/2026): Memory, Mistrust, Middlemen, Megawatts & Memes

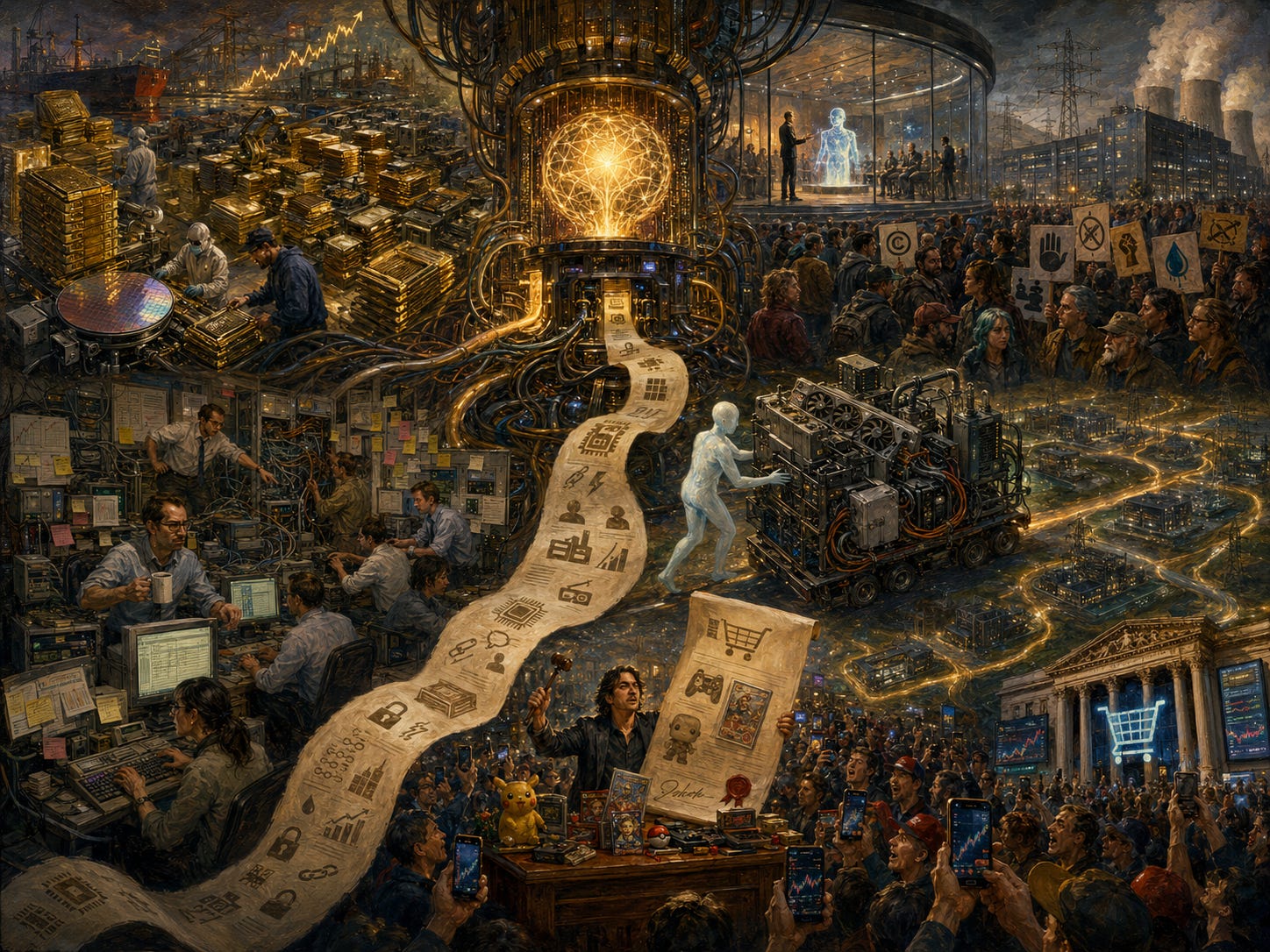

Memory becomes the bottleneck, AI meets public backlash, PE bets big on deployment, Anthropic goes infra shopping, and GameStop turns a takeover into performance art

🎬 TL;DR

AI demand has turned HBM from a cyclical commodity into a strategic choke point. The bull case is scarcity. The bear case is that every memory supercycle eventually discovers supply.

AI has a social contract problem. The industry sees abundance. People outside see extraction: scraped work, threatened jobs, strained grids, and billionaires explaining inevitability.

OpenAI and Anthropic are building PE-backed deployment arms because enterprise AI still requires consultants, integrations, permissions, and one cursed spreadsheet.

Anthropic answered OpenAI’s compute taunts with an infra shopping spree: AWS, Google, SpaceX, TPUs, GPUs, Trainium, Colossus. Rate limits up, outages down.

GameStop’s eBay bid was capital markets as content. Ryan Cohen understands that attention is leverage. Unfortunately, eBay shareholders are still old-fashioned enough to ask where the money is coming from.

🧠 Memory Becomes the Choke Point

If you’ve looked at public markets recently, you’ve seen memory stocks - Micron Technology, SK Hynix, Samsung Electronics - absolutely ripping. So why is the market suddenly excited about memory?

AI is a memory problem

An LLM is basically a giant pile of numbers. Generating a token means moving and multiplying huge matrices. The math is simple; the scale isn’t. Memory keeps the accelerator fed.

Training is brutally memory-intensive: weights, gradients, activations, optimizer states. If bandwidth is too low, GPUs stall.

Inference sounds lighter but it isn't. The culprit is the key-value cache. Models store prior attention state to avoid recomputation. Longer context = bigger cache. Long conversations, codebases, documents, agents - all increase memory pressure. “Context length” is really a memory bill.
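To put a rough number on that memory bill, here is a back-of-the-envelope sketch using the standard approximation for KV-cache size: one key and one value vector per layer, per KV head, per token. The model shape below (32 layers, 8 KV heads, head dimension 128, 2-byte values) is a hypothetical configuration picked for illustration, not any specific product's, and `kv_cache_bytes` is a name made up for this example.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   batch_size=1, bytes_per_value=2):
    """Approximate KV-cache size in bytes.

    The leading 2 accounts for storing both keys and values.
    bytes_per_value=2 assumes 16-bit (fp16/bf16) cache entries.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)


# Hypothetical model shape, chosen only for illustration.
config = dict(num_layers=32, num_kv_heads=8, head_dim=128)

for seq_len in (4_096, 32_768, 131_072):
    gib = kv_cache_bytes(seq_len=seq_len, **config) / 2**30
    print(f"{seq_len:>7} tokens -> {gib:.2f} GiB per sequence")
```

Under these assumptions the cache grows linearly with context length and with the number of concurrent sequences, which is why long contexts and many simultaneous users compound each other.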

Every product trend the AI industry is excited about - longer context, more reasoning, more agents, and more concurrent users - adds memory pressure.

HBM turned memory from commodity into choke point

Quick refresher:

DRAM → fast working memory

NAND → persistent storage

AI runs on HBM: stacked DRAM next to the GPU with massive parallel access.

If DRAM is a warehouse across town, HBM is a warehouse bolted onto the factory, with 1,000 loading docks. Historically, memory was cyclical: prices up → supply floods in → prices crash → repeat. AI breaks that.

Supply is constrained and getting tighter

HBM is harder to make, more wafer-intensive, and slower to scale. So when suppliers shift toward HBM, they steal capacity from DRAM.

That creates a double squeeze:

Direct → AI demand soaks up HBM

Indirect → less supply for everything else

Prices spike → profits explode

Memory has brutal operating leverage.

Revenue = units × price

Costs ≈ fixed (short term)

So when prices move, earnings re-rate violently.
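A toy model makes the leverage concrete. The figures below (100 units, a base price of 1.0, fixed costs of 60) are invented for illustration and are not any vendor's actual numbers; the only thing taken from the text is the structure: revenue is units × price and costs stay roughly fixed in the short term.

```python
def operating_profit(units, price, fixed_costs):
    """Profit when costs do not move with price (short-term assumption)."""
    return units * price - fixed_costs


def operating_margin(units, price, fixed_costs):
    """Operating profit as a fraction of revenue."""
    return operating_profit(units, price, fixed_costs) / (units * price)


# Hypothetical baseline: 40% margin before any price move.
units, base_price, fixed_costs = 100, 1.0, 60.0
base_profit = operating_profit(units, base_price, fixed_costs)

for price_change in (0.0, 0.30, 0.55):
    price = base_price * (1 + price_change)
    profit = operating_profit(units, price, fixed_costs)
    margin = operating_margin(units, price, fixed_costs)
    print(f"price {price_change:+.0%}: margin {margin:.1%}, "
          f"profit {profit / base_profit - 1:+.1%} vs. baseline")
```

In this sketch a 55% price increase lifts profit by well over 100%, because every extra dollar of price falls straight through to the bottom line. The same arithmetic runs in reverse when prices drop.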

Recently, DRAM prices have spiked 55–60% QoQ and NAND prices 30–40% QoQ. As a result, margins have doubled to ~80% for DRAM and ~60% for NAND. Samsung is on track to out-earn Apple and Google this year.

Demand looks less speculative than in prior cycles

In the old memory cycle, the fear was always that today’s boom was tomorrow’s oversupply. This time, demand is driven by hyperscaler AI capex. They’re locking in supply early, giving vendors pricing power and visibility.

The memory market has shifted from a cyclical commodity market to a supply-constrained, AI-critical oligopoly with pricing power. That is the bull case.

The bear case has history on its side: memory is still memory. Capacity eventually comes online. Demand normalizes. Prices fall. Every cycle looks structural at the top - that’s part of the trick.

The real question: Has AI permanently increased the slope and durability of memory demand? If inference scales, agents stick, and enterprises run persistent AI workflows, memory demand shifts from consumer cycles to the industrialization of intelligence - a much bigger market.

🧯AI Misreads the Room

I am an AI optimist. I think it will be one of the defining technologies of our lifetime. I also believe the industry is badly misreading the room.

Inside the Valley, the conversation still sounds like 2023: bigger models, better benchmarks, longer context windows. But outside, the public is asking a more basic question: Who asked for this?

There is a growing sense that AI is being imposed on the public by a small group of companies, backed by enormous capital, consuming enormous energy, trained on people’s work, threatening people’s jobs, and sold back to society as inevitable.

Evan Spiegel, hardly an anti-tech populist, recently warned that we are underestimating the backlash against AI. Even Altman acknowledged that “AI is not very popular in the U.S. right now.”

The polling is worse than the vibes. In a Mar-26 NBC News poll, only 26% had a positive view of AI, while 46% were negative. AI’s net image was -20, slightly worse than ICE.

We have taken tech that can help cure disease, tutor children, defend networks, accelerate science, and somehow branded it in the neighborhood of deportation raids. That takes work.

Some of it is economic. AI entered the workplace wrapped in a threat: use this, or be replaced by someone who does. For 2 years, the industry’s loudest voices proclaimed that entire categories of work would disappear and that everyone must adapt.

Then, when people reacted badly to being told they were economically disposable, the language softened: we love our users, AI will augment people, the future will be abundant. But people had already heard the first part.

You cannot tell people they are economically obsolete on Monday and expect them to applaud your product launch on Tuesday.

The backlash is also physical. Maine’s Legislature passed a first-in-the-nation temporary ban on large data centers. The debate centered on electricity, water, environmental impact, and whether communities were prepared for AI-scale infra.

AI has gone from a software story to a land-use story. People may tolerate an app update they dislike. They are much less tolerant of a 20MW facility down the road.

The backlash is also personal. In Apr-26, a suspect threw an incendiary device toward Sam Altman’s residence. Thankfully, no one was injured. The incident shows how intensely AI leaders are being personalized as avatars of a future people feel powerless to shape.

The backlash feels strange to technologists because from the inside, AI looks like abundance: more intelligence, more creativity, more productivity.

From the outside, it can look like extraction: my work was scraped, my job is at risk, my power grid is strained, my kid is addicted, my feed is polluted, and the people doing it are becoming billionaires.

AI companies are building tech that could genuinely improve human life but often communicate in ways that make people feel minimized by it. The challenge is not merely to build better models, but to build a better social contract.

🛠️ Forward-Deployed Everything

OpenAI and Anthropic are launching AI services JVs, which is a fancy way of saying the future of automated work still requires a lot of very expensive humans. In a very Palantir-coded move, both frontier model labs are setting up PE-backed deployment machines to get AI working inside enterprises.

Anthropic announced an enterprise AI services company with Blackstone, Hellman & Friedman, and Goldman Sachs as founding partners contributing ~$1.5B. The mission: help mid-sized companies bring Claude into core operations. Anthropic’s applied AI engineers will work alongside the new company’s teams to find use cases and build custom systems.

OpenAI is reportedly going even bigger. Its new venture, The Deployment Company, is said to be raising $4B from a consortium of 19 PE investors, valuing the entity at ~$10B. Reuters reports it is already in advanced discussions around 3 acquisition targets in AI services.

For two years, the narrative was that frontier AI would make enterprise software simpler, more automated, and less dependent on consultants. But when AI finally arrived at the enterprise, it was greeted by a 17-year-old ERP system, 6 disconnected databases, a security team with veto power, a compliance process moving at geologic speed, and one mission-critical spreadsheet called “FINAL_v7_revised_REAL_final.”

Enterprise AI is often described as a high-margin software business. Deployment still looks suspiciously like labor-intensive, highly skilled services.

The PE angle is the clever part. PE firms own or influence large portfolios of companies under pressure to improve margins, automate workflows, and show AI-driven productivity. The AI labs need distribution. The PE firms need a credible AI transformation story. Everyone benefits.

Some implications.

Services are becoming a control point.

Everyone wanted software margins. The path to those margins may run through services first. It also doubles as market intelligence. Whoever owns the services layer gets a privileged feedback loop and can turn messy customer work into templates, agents and features.

AI labs are going around traditional SIs

The obvious threatened incumbents are traditional systems integrators and consulting firms: Accenture, Deloitte, PwC, McKinsey, BCG, and boutique consultancies. Anthropic says its new company will still work alongside existing partners, including its Claude Partner Network. Maybe. But the strategic tension is obvious.

This is a pre-IPO story

Both companies are trying to justify enormous valuations. Enterprise revenue quality matters. Consumer AI can be massive, but enterprise AI is where investors look for recurring contracts, high ACVs, budget durability, lower churn, measurable ROI, and strategic lock-in.

The irony is rich: AI was supposed to automate knowledge work. Its next act is hiring knowledge workers to help knowledge workers use tools that automate knowledge work.

⚡ Anthropic’s Compute Glow-Up

OpenAI spent weeks needling Anthropic on the exact vulnerability users could feel: rate limits, compute constraints, the sense that Claude was powerful but rationed.

For a while, this was one of the cleanest knocks against Anthropic. OpenAI could position itself as the lab with scale, availability, and user love, while Anthropic looked like the careful safety shop that had built a great model and parked it behind a velvet rope.

Then Anthropic went shopping. The lab that was too cautious on compute is now assembling one of the most aggressive infra portfolios in AI.

Anthropic may not have been timid so much as ambushed by its own success: Q1 revenue grew 80x YoY. At that speed, capacity planning becomes disaster response. You can be prudent at 10x. At 80x, you call everyone with a data center, including Elon Musk.

Amazon. Anthropic committed up to $100B over 10 years to AWS, securing up to 5GW of capacity.

Amazon invested $5B in Anthropic, with up to $20B potentially coming later. That builds on its prior $8B.

Google. This deal is even more fascinating because Google is both Anthropic’s infra provider and one of its most direct model competitors.

Anthropic committed $200B over 5 years to GCP. That deal alone represents 40%+ of GCP's backlog and helped push Alphabet closer to Nvidia’s market-cap throne. Alphabet is also investing up to $40B in Anthropic.

SpaceX. This is the funniest and most revealing deal. Musk, who was recently dunking on Anthropic, is now renting it compute. Anthropic will use all the compute capacity at Colossus 1, giving it 300 MW and 220K GPUs. This is already allowing it to double Claude Code limits, remove peak-hour reductions, and raise Opus API limits.

SpaceX gets a marquee AI customer ahead of IPO. Anthropic gets much needed capacity. Nvidia gets another proof point that GPU demand remains feral. Everyone gets to pretend this was always the plan.

The clean “Anthropic = AWS” mental model is dead. Anthropic is buying every credible form of compute it can get: AWS Trainium, Google TPUs, Nvidia GPUs, Fluidstack data centers, and now SpaceX’s Colossus 1. That tells us:

Compute scarcity is still real. If demand were easy to serve, Anthropic would not be stitching together capacity from half the industrial economy.

Anthropic is reducing dependency risk. At frontier scale, relying too heavily on one cloud, chip architecture, or partner is a strategic vulnerability. Redundancy is survival.

Hyperscalers are no longer just cloud vendors. They are the kingmakers, financiers, landlords, and toll collectors of the AI economy. The model labs may get the headlines, but the hyperscalers are turning those headlines into backlog.

OpenAI’s critique was not wrong. Anthropic did need more compute. The mistake was assuming compute scarcity would remain a durable point of differentiation. Anthropic took the criticism as product feedback and responded with the most absurd compute procurement speedrun imaginable.

🎪 GameStop Discovers M&A as Content

GameStop trying to buy eBay makes you wonder whether the market has developed a taste for performance art.

GameStop, the mall-era video game retailer that became the emblem of the 2021 meme-stock revolt, made an unsolicited offer to buy eBay. It was an almost cartoonishly asymmetric move: a smaller, volatile retailer (~$10B market cap) trying to swallow a far larger, profitable marketplace (~$47B market cap). But CEO Ryan Cohen’s genius, or flaw, is that he turned the bid into internet theater.

Within days, Cohen opened an eBay storefront and began auctioning off personal items and collectibles, joking that he was “selling stuff on eBay to pay for eBay.” The stunt had perfect meme-market symmetry: the man trying to buy the marketplace was using the marketplace to fund the purchase.

Cohen has always understood something many public-company executives do not: attention is a form of capital. In the post-GameStop world, retail investors, online communities, and viral narratives can affect financing, stock prices, and strategic optionality. The eBay storefront was, in that sense, a deal roadshow translated into internet-native language.

But attention is not the same as credibility.

GameStop offered ~$56B for eBay, half in cash and half in GameStop stock. But the math wasn't mathing. GameStop had ~$9B of cash, pointed to a $20B debt commitment, and already owned about 5% of eBay. Even then, there was a multibillion-dollar funding gap. GameStop shares dropped ~10% after Cohen struggled to answer basic questions about the financing.

The stock component only made the problem louder. eBay shareholders would have been asked to take GameStop equity as currency - equity tied to a meme-stock name with a much smaller market cap and a history of violent swings. A dollar of cash is a dollar. A dollar of GameStop stock is a philosophy seminar.
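A quick sketch shows why the stock half is so awkward. It uses only the round numbers quoted above (a ~$56B offer, half of it in stock, and a ~$10B GameStop market cap) and makes two simplifying assumptions that are mine, not the deal's: new shares are issued at GameStop's current price, and the existing ~5% eBay stake is ignored. The function name is made up for this example.

```python
def target_holder_stake(acquirer_market_cap, stock_consideration):
    """Fraction of the combined company's equity that ends up with the
    target's shareholders, assuming new shares are issued at the
    acquirer's current price (a simplification)."""
    return stock_consideration / (acquirer_market_cap + stock_consideration)


# Round figures from the text, in billions of dollars.
offer_value = 56.0
stock_fraction = 0.5          # "half in cash and half in GameStop stock"
gamestop_market_cap = 10.0

stock_consideration = offer_value * stock_fraction
stake = target_holder_stake(gamestop_market_cap, stock_consideration)

print(f"Stock to issue: ${stock_consideration:.0f}B "
      f"against a ${gamestop_market_cap:.0f}B market cap")
print(f"eBay holders' share of the combined equity: {stake:.0%}")
```

On these assumptions, eBay shareholders would end up holding roughly three quarters of the combined company's equity, which makes the "acquirer" the junior partner in its own takeover.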

Cohen’s supporters see a founder-investor willing to attack complacency. They see eBay as an under-optimized marketplace with a still-powerful brand and room for operational improvement.

The bear case is simpler: GameStop has not yet convincingly fixed GameStop. Buying eBay would be 4 hard things at once: integration, financing, governance, and credibility. The theatrical eBay auctions may have amplified the bid, but they also made the whole thing look less institutional and less bankable.

And yet, the stunt worked in one respect: everyone talked about it. The bid, the storefront, the screenshots, the jokes - each chapter extended the story’s half-life. That is the brilliance of Cohen’s style. The narrative velocity is undeniable.

The GameStop-eBay affair is a case study in what public-company strategy looks like after meme stocks, founder cults, and social platforms have merged with capital markets. In the end, the bid became indistinguishable from content. Cohen transformed a financing question into a feed event. That may be enough to win attention. It is not yet enough to win eBay.
